Traditional approaches to AI in manufacturing end up as costly prototypes with little real-world impact. To achieve scale, a new type of solution is needed.

It’s hard to explain. The manufacturing industry is one of the most natural candidates for deriving value from artificial intelligence and machine learning. Manufacturing processes generate high volumes of structured or semi-structured machine data that is ripe for analysis, and every process optimization can lead to clearly quantifiable benefits: better throughput, quality, yield, and margins, along with less waste and fewer product recalls. But is this what’s happening?

After speaking to dozens of manufacturers in recent years, including companies that are household names in the pharmaceutical, automotive, and electronics industries, we’ve learned that operationalizing AI at scale is often still more of a plan than a reality. As we’ll show, this is caused by a combination of overreliance on external expertise, lack of adequate tooling, and difficulty in operationalizing data science insights.

Let’s take a closer look at the challenges of AI at scale, examine the limitations of current solutions, and explain why we believe Vanti’s self-service adaptive AI provides a better way forward.

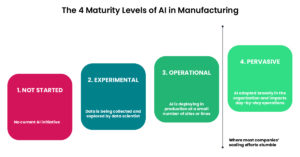

The 4 Levels of AI Maturity in Manufacturing

AI initiatives in manufacturing typically start with the realization that repetitive processes can be automated in order to better utilize human resources and improve operational efficiencies.

Take the example of AI-based techniques to improve defect detection rates: the manufacturer wants to replace post-hoc data analysis with a machine learning algorithm that can crunch sensor data in real-time, with the desired outcome of detecting more defects earlier in the production process. Such a system would alert the worker on the production floor to take action – e.g., remove a part from production before work is expended on it.
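To make the idea concrete, here is a minimal Python sketch of real-time flagging on a stream of sensor readings. It is an illustration only, not a description of any vendor's method: it swaps a trained machine learning model for a simple rolling z-score, and the class name, window size, and threshold are all hypothetical choices.

```python
from collections import deque
from statistics import mean, stdev


class RollingDefectDetector:
    """Flags readings that deviate sharply from a sensor's recent history.

    Hypothetical illustration: a rolling z-score stands in for a trained model.
    """

    def __init__(self, window=50, threshold=3.0, min_history=10):
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # z-score above which we alert
        self.min_history = min_history       # readings needed before judging

    def check(self, reading):
        """Return True if the reading looks anomalous relative to recent ones."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(reading - mu) / sigma > self.threshold:
                is_anomaly = True
        # Learn only from readings that look normal, so that one bad part
        # does not distort the baseline.
        if not is_anomaly:
            self.history.append(reading)
        return is_anomaly


# Made-up readings from a single sensor; the twelfth value is an outlier.
detector = RollingDefectDetector()
readings = [10.0, 10.1, 9.9, 10.2, 9.8, 10.0, 10.1, 9.9, 10.0, 10.2, 10.1, 14.5, 10.0]
flags = [detector.check(r) for r in readings]
```

In a real deployment the flag would trigger the alert to the worker on the floor. The point of the sketch is only that the decision is made per reading, as the data arrives, rather than in a post-hoc analysis.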

But does that plan pan out? In terms of implementation, we can think of four broad categories that most companies’ AI projects fall into:

- Not started: No current AI initiative in place, or only vague plans to launch one

- Experimental: Data is being collected and used by data scientists to explore hypotheses and suggest process improvements

- Operational: AI has been deployed at a limited scale in production – such as a proof of concept project running at a single site

- Pervasive: AI has been adopted broadly within the organization, is used across sites and assembly lines, and impacts day-to-day operations

In our experience, many manufacturers fail to reach Level 4, where AI is deployed broadly, helps generate insights across different manufacturing sites and product lines, and informs day-to-day decisions on the production floor. Industry data agrees: a Deloitte survey conducted in China found that 91% of AI projects failed to meet expectations.

It’s not uncommon to find that the AI project mostly exists as a slide on the CIO’s annual planning deck (where it’s been since 2018), while factory operations are still managed in spreadsheets or worse. The most common scenario is that AI initiatives start with great fanfare, only to end up stuck between Levels 2 and 3 in the chart above: Systems that are operational in only a handful of sites, and mostly used by data scientists who are looking to draw insights retroactively.

Cases where AI is used by non-specialists to make real-time decisions are vanishingly rare.

Why Current Approaches to AI Don’t Scale

To understand where things go wrong, we need to take a closer look at the ways companies attempt to develop and deploy ML models in production. The two main approaches used today are outsourcing development to specialists, or in-house development using a combination of generalized and domain-specific tooling. Each approach has limitations, which we’ll dive into now.

Reliance on External Experts and Consultants Creates Fragile Solutions at an Untenable Cost

The notorious difficulty of hiring data scientists and data engineers, and the lack of these skill sets within traditional manufacturing organizations, led many companies to look for external solutions: AI service companies or large consultancies who would be hired to build a bespoke system for defect detection, fault prediction, or other process optimizations.

Projects might start with a proof of concept at a specific site or line (which would take months and millions of dollars to develop), with the stated goal of rolling out additional use cases afterwards. In practice, these projects would often produce working prototypes but rarely go into full-scale production. And while each project is different, there is some commonality to the types of problems that arise:

Localized knowledge gaps hinder implementation: Both discrete and process manufacturing branch into highly specialized domains. Manufacturers of medical products, microchips, or car parts operate under different regulatory constraints, supply chains, and acceptable margins of error. From a data perspective, there can be major differences in the way data is pre-processed, collected, and made available for analytical querying. Working with multiple parts vendors adds another level of variability in data and processes.

Any application of AI requires a high level of domain knowledge, as well as awareness of data collection and preparation processes (which can impact the granularity, freshness, or accuracy of the data). Service providers whose claim to fame is developing AI models often lack this very specific domain knowledge, which can cause downstream issues ranging from bottlenecks around data cleaning and preprocessing to difficulties integrating with local IT systems that might differ between manufacturers and plants.

Dealing with changes due to model and data drift: An AI system might work perfectly ‘out of the box’, but models can lose their predictive power over time. This could be due to changes in the underlying data (data drift), or degradation in model performance due to changing inputs and outputs (model drift).

Drifts can occur due to changes in the manufacturing environment, updates to data structures, or the introduction of parts from new suppliers. Regardless of the cause, the end result is that the AI system loses its ability to accurately detect or predict faults, delivers less and less value to the business, and thus never reaches large-scale deployment in production.
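As a rough illustration of how data drift can be quantified, the sketch below computes a population stability index (PSI) between a baseline sample of one numeric feature and a more recent sample. PSI is a general-purpose technique chosen here for illustration, not a description of any vendor's approach; the function name, bin count, and sample data are assumptions, and the 0.25 cut-off is a commonly quoted rule of thumb rather than a fixed standard.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two samples of one numeric feature; larger values mean a bigger shift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Made-up measurements: parts from a new supplier run 0.5 units higher.
baseline = [20 + (i % 10) * 0.1 for i in range(200)]
new_supplier = [v + 0.5 for v in baseline]

psi = population_stability_index(baseline, new_supplier)
drift_detected = psi > 0.25  # assumed threshold, tune per feature and use case
```

A check like this only covers a shift in input data. Detecting a drop in predictive accuracy additionally requires comparing predictions against confirmed outcomes once they become available.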

Not built for manufacturing workers: AI service providers specialize in solving specific problems for technical stakeholders rather than building systems for the factory floor. While these types of implementations can be valuable for retroactive root cause analysis or optimizations, they fail to convey actionable insights in terms that a non-specialist can easily understand and respond to – which in turn means that they do not generate pervasive, real-time impact on operational decisions made by the people on-site.

Eventually, the organization finds itself with a very limited system. It might seem to deliver on the initial scope – e.g., improving defect detection rates by a certain percentage – but it fails to deliver results at scale: it isn’t resilient to changes in the data or in the manufacturing environment, it’s only applicable to specific assembly lines, and it isn’t useful for manufacturing workers. Closing these gaps requires capabilities that might not be within the core competencies of the specialist group that developed the AI solution, leaving the manufacturing organization with a solution that delivers little to no value.

In-House Development with Limited Tooling Results in Lengthy and Complex Implementations

In recent years there has been a proliferation of AI tooling. Companies such as DataRobot offer unified AI platforms that can be used by data scientists working in any domain, shortening time to production by automating the data engineering and ops work required for model training and deployment.

The emergence of said platforms, along with internal data science hiring gaining pace, has allowed some manufacturers to move AI initiatives away from outsourced experts to in-house teams. Staff data scientists would now be tasked with designing and training the AI models, deploying them in production, and assessing their impact and accuracy on an ongoing basis.

Moving the project to in-house teams removes many of the knowledge gaps that existed when relying on external expertise, and allows organizations to see value earlier due to faster development cycles. However, the scaling issues remain due to:

- Complex specialized toolset to manage: Working with generalized AI platforms means effort needs to be expended in order to tailor solutions for manufacturing, and then to further tailor those solutions to the requirements of the specific manufacturer, plant, parts vendor or production line. This increases the overall cost and time to value of the implementation.

- Maintenance overhead: Efforts around data collection, monitoring, and model tuning are often underestimated and can end up eating up most of the time spent on the AI initiative. Data scientists’ focus shifts from research and model development to maintaining large-scale platform deployments, hindering further improvements.

- Still no interface for the manufacturing floor: AI platforms are built for data scientists and developers. To translate the results they generate into actionable items that manufacturing workers can use in daily decisions – such as removing a faulty product or changing the settings on a specific machine – the organization needs to build a separate front-end system that would interface with the AI platform and communicate insights in straightforward terms. Creating such an interface requires additional development cycles and teams, further slowing down value generation.

- Integration with local IT systems: This remains a pain point that data science teams are often ill-equipped to solve. Instead, it adds another layer of technical debt to overstretched IT departments.

And so we find ourselves in the same place: machine learning models that satisfy the requirements for a proof of concept, but fail to become pervasive, and remain limited in scope and impact.

The Vanti Approach and How It’s Different – 4 Principles of Scalable AI

It’s exactly because we saw how hard it is to scale AI in manufacturing – including experiencing many of the problems above in our previous jobs – that we built Vanti as a platform that can provide scalable industrial AI ‘as a service’.

We wanted a solution that can easily be implemented across dozens of production lines spanning multiple sites, provide value from day 1, and continue doing so without becoming the sole focus of a data science group. Hence we designed Vanti around 4 core principles:

- Instant value with no coding: To provide immediate value, the system needs to be able to generate insights without months of custom coding and configuration. With Vanti, you can see the results of predictive models within minutes after uploading a CSV file, and deployment in production is a matter of hours or days with no coding required.

The benefits from every deployment are near-instant and easy to quantify, creating a virtuous circle for business leaders who feel confident in adding scope. At the same time, data science teams are freed from repetitive implementation tasks and can focus on high-impact experimental work.

- Adaptive AI: To address the challenge of manual maintenance and oversight, which often hinder larger roll-outs, Vanti leverages automated machine learning models that are adaptive, self-optimizing, and self-monitoring. They will adjust according to changes in the underlying data structure and distribution, they can alert the users to drift and changes in predictive accuracy, and they can suggest a model that will generate better outcomes. We’ve covered these features in much more depth in our technical whitepaper – read it here.

- Built for the production floor: As we’ve mentioned, many initiatives get stuck when it comes to translating learnings from the data science lab into action items that are applicable to the factory floor. Vanti is built for operations professionals and surfaces insights in terms that are simple to understand and take action on.

- Baked-in manufacturing best practices: Rather than forcing manufacturing organizations to reinvent the wheel using generalized platforms, we’ve built a tool that’s specifically designed for the IT systems, data structures, and use cases that large-scale manufacturers deal with.

As a result of these design choices, Vanti is a system that scales – making predictive analytics a reality for complex industrial use cases in very short timeframes. It allows manufacturing professionals to detect defects earlier and improve yield, and it frees up valuable data science resources to solve larger and more difficult problems.

Summary of the Different Approaches:

| | AI consultancies | In-house development with generalized AI platforms | Vanti’s Industrial AI as a Service |
| --- | --- | --- | --- |
| Time to first deployment / proof of concept | Months to years. Requires bespoke model training and development | Weeks to months. Requires tailoring and configuring a solution for specific use case | Hours to days. Built-in integrations and ready-to-use models |
| Time to scale to additional sites or production lines | Years. Different production sites or lines require additional bespoke development work | Months to years. Implementation and deployment challenges need to be handled amongst other technical debt | Weeks to months. Self-learning models and native integrations enable deployment in any site where data is collected |
| Ongoing maintenance overhead | Very High. Model and data drift require additional 3rd party development to address | High. Continuous management of model and data drift | Low. Self-optimizing, self-monitoring and adaptive algorithms remove the need for manual oversight |
| End user | Data scientists, senior technical stakeholders | Data scientists | Operations professionals |

To learn more about Vanti’s approach to AI in manufacturing:

- Watch a 3-minute demo video.

- Schedule a no-strings-attached call with one of our experts to discuss best practices and learn how Vanti customers are solving challenges in industrial AI.